You’ve got a Raspberry Pi collecting dust, a power-efficient little server in waiting, and an OpenClaw model you want running 24/7 without a cloud bill. The question isn’t *if* you can get OpenClaw onto a Pi; it’s *how well* it performs and what compromises you’ll make along the way. The appeal is obvious: local, private, cheap inference. The reality, particularly with larger models, quickly diverges from the dream.

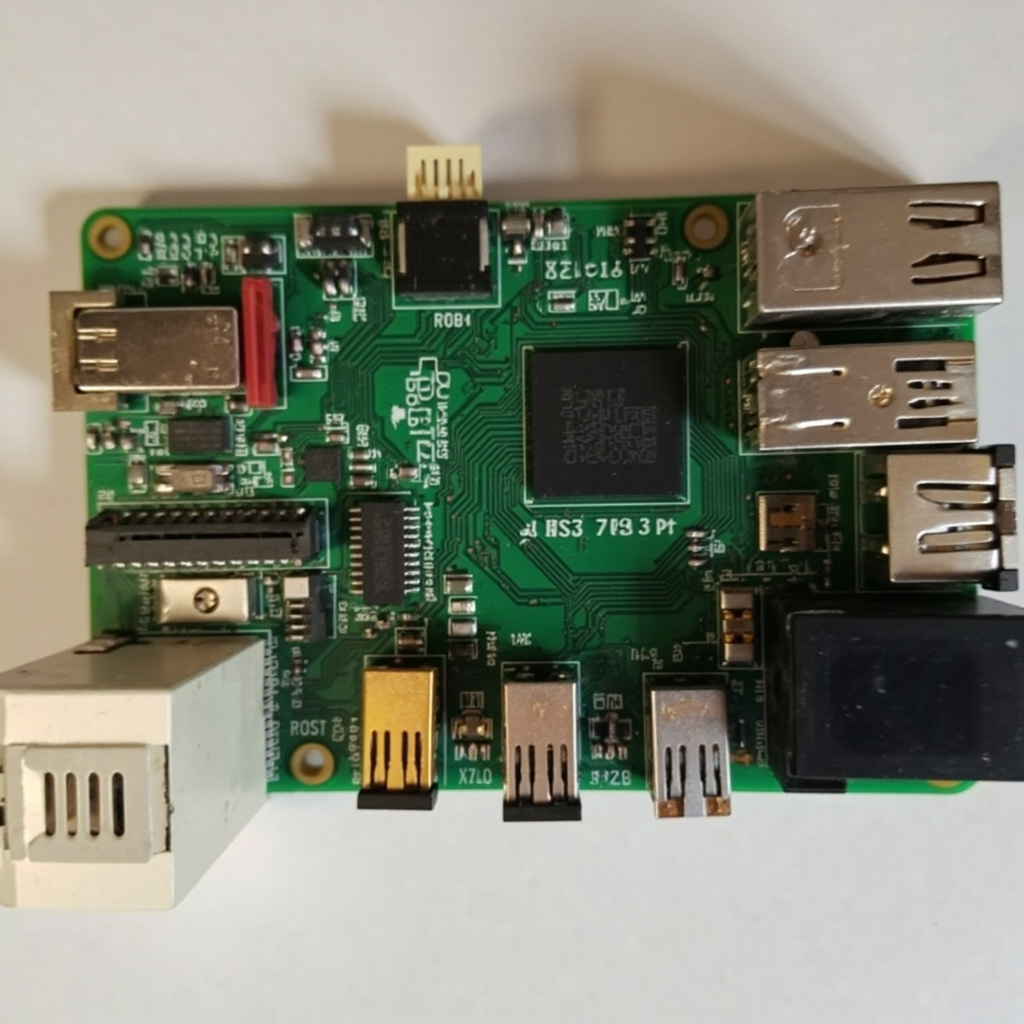

The core challenge isn’t installation. Getting OpenClaw itself onto a Pi is straightforward: build it from source, or use pre-compiled binaries if you’re targeting a common architecture like ARM64. On a modern Pi 4 or 5, use a 64-bit OS such as Raspberry Pi OS (64-bit). The build command is pretty standard: `cmake -B build -DOPENCLAW_BUILD_TESTS=OFF -DOPENCLAW_BUILD_EXAMPLES=OFF && cmake --build build --config Release`. One caveat: on a Pi, CMake normally uses a single-config generator such as Unix Makefiles, where `--config Release` has no effect, so if the project doesn’t set a default build type, add `-DCMAKE_BUILD_TYPE=Release` to the configure step. The real bottleneck emerges when you try to load any non-trivial model. Your Pi’s RAM (especially if you’re on 4GB or less) becomes the immediate limiting factor. A 7B model quantized to around 4 bits needs roughly 4GB for the weights alone, which leaves little headroom for the OS or other processes.
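Before downloading anything, the fit can be estimated from parameter count and bits per weight. The sketch below is back-of-envelope arithmetic, not something OpenClaw provides. The function names are made up for illustration, and the inputs are assumptions to adjust for your setup: about 4.85 bits per weight for Q4_K_M (the figure commonly quoted for GGUF files), 1 GiB of runtime overhead for context and buffers, and 1 GiB reserved for the OS.

```python
GIB = 1024 ** 3


def model_size_gib(params_billions: float, bits_per_weight: float) -> float:
    """Approximate size of the quantized weights in GiB."""
    total_bits = params_billions * 1e9 * bits_per_weight
    return total_bits / 8 / GIB


def fits_in_ram(
    params_billions: float,
    bits_per_weight: float,
    ram_gib: float,
    overhead_gib: float = 1.0,    # assumed: context cache and scratch buffers
    os_reserve_gib: float = 1.0,  # assumed: memory left for the OS
) -> bool:
    """Return True if weights plus overhead fit in the RAM left after the OS."""
    needed = model_size_gib(params_billions, bits_per_weight) + overhead_gib
    available = ram_gib - os_reserve_gib
    return needed <= available


if __name__ == "__main__":
    Q4_K_M_BITS = 4.85  # assumed average bits per weight for Q4_K_M
    for ram in (4, 8):
        for params in (1.1, 3, 7):
            size = model_size_gib(params, Q4_K_M_BITS)
            verdict = "fits" if fits_in_ram(params, Q4_K_M_BITS, ram) else "too big"
            print(f"{params}B on {ram}GB Pi: ~{size:.1f} GiB weights, {verdict}")
```

Under these assumptions a 7B Q4_K_M model does not fit on a 4GB Pi but does on an 8GB one, while a 3B model squeezes onto 4GB. Longer contexts raise the overhead, so treat the result as a lower bound.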

The less obvious constraints are thermal throttling and I/O performance. While you *can* load a 7B Q4_K_M model onto an 8GB Pi 4, don’t expect real-time responses to complex prompts. Even with active cooling, the Pi’s CPU can hit its thermal limits during sustained inference and clock down. What looks like a memory bottleneck may actually be a CPU one, or an SD card I/O bottleneck during model loading: with a low-quality SD card, loading the model into RAM can be agonizingly slow, leading you to believe the model is too big when the storage is simply slow. For any serious use, an NVMe drive on a Pi 5 is close to a requirement; it dramatically improves model load times and overall system responsiveness.
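One way to tell throttling apart from a memory or storage problem is to check the firmware’s throttle flags while inference is running. The sketch below decodes the bitmask printed by `vcgencmd get_throttled` on Raspberry Pi OS. The bit meanings follow the Raspberry Pi documentation; the helper names are hypothetical, and the script assumes `vcgencmd` is installed and on the PATH.

```python
import subprocess

# Bit positions in the value reported by `vcgencmd get_throttled`.
THROTTLE_FLAGS = {
    0: "under-voltage detected",
    1: "ARM frequency capped",
    2: "currently throttled",
    3: "soft temperature limit active",
    16: "under-voltage has occurred",
    17: "ARM frequency capping has occurred",
    18: "throttling has occurred",
    19: "soft temperature limit has occurred",
}


def decode_throttled(output: str) -> list[str]:
    """Turn a line such as 'throttled=0x50005' into readable flag names."""
    value = int(output.strip().split("=", 1)[1], 16)
    return [name for bit, name in THROTTLE_FLAGS.items() if value & (1 << bit)]


def read_throttled() -> list[str]:
    """Query the firmware; only works on a Pi where vcgencmd is available."""
    result = subprocess.run(
        ["vcgencmd", "get_throttled"],
        capture_output=True,
        text=True,
        check=True,
    )
    return decode_throttled(result.stdout)
```

Calling `read_throttled()` before and after a long generation shows whether the slowdown coincides with throttling. An empty list means no flags are set; "has occurred" flags stay set until reboot, so compare against a reading taken before the run.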

So, does it work? Yes, for smaller, highly quantized models (e.g., 3B, or even heavily pruned 7B models) and simple prompts where latency isn’t critical. For anything beyond basic text generation or simple summarization, the Pi soon shows its limitations. It’s an excellent platform for learning and experimenting with OpenClaw, understanding the fundamentals of local inference, and testing small-scale applications. For production-level AI assistants that require speed and robustness, though, you’ll outgrow it.

To start experimenting, download a tiny OpenClaw-compatible model like `TinyLlama-1.1B-Chat-v1.0.Q4_K_M.gguf` and try running it locally.

Frequently Asked Questions

Can OpenClaw be successfully run on a Raspberry Pi?

Yes, but it takes some setup and realistic performance expectations: a 64-bit OS, a small quantized model, and tolerance for slow responses. It isn’t an out-of-the-box experience, but with effort it’s achievable.

What are the main challenges when running OpenClaw on Raspberry Pi?

Key challenges include the Pi’s limited memory and processing power, thermal throttling under sustained inference, and slow storage during model loading. Performance varies significantly with the Pi model, the size and quantization of the model you load, and the workload.

Which Raspberry Pi models are best suited for running OpenClaw?

Newer, more powerful models like the Raspberry Pi 4 or 5 are recommended, ideally with 8GB of RAM; a Pi 5 with an NVMe drive gives the best model load times. Older models will struggle significantly.
